This is a sub-section of my posts describing the Sustainable Energy Design 3 course group project to Design and Build a Micro Wind Turbine – for more information see here

Charging and Discharging Batteries

We are charging a 12V leisure lead acid battery. The two biggest concerns in charging a lead acid battery are:

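- Overcharging – this causes excessive gassing, water loss and corrosion of the positive grid, which damage the battery in the long term and reduce its capacity

- Undercharging – this is inefficient, wasting lots of power as heat dissipated in resistors, which means cooling might be needed; a battery that is repeatedly left partially charged also gradually sulfates, which reduces its capacity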

However, the values at which a battery overcharges or undercharges vary not only from battery to battery but, above all, with its state of charge (SOC) as it charges and discharges.

There are different types of charging systems to accommodate these factors:

- Unregulated (transformer-based) chargers – the cheapest chargers, consisting of a wall-mount transformer and a diode; they deliver 13 to 14 volts over a reasonable current range, but when the current tapers off, the voltage rises to 15, 16, 17 or even 18 volts

- Regulated taper chargers – either constant voltage or constant current is applied to the battery through a combination of transformer, diode and resistance, and the voltage is not allowed to climb higher than the trickle charge voltage

- Constant-current chargers – maintain a constant current below the maximum safe charging current

- Constant-voltage chargers – a circuit set to the maximum allowable charge voltage, with a current limit to control the initial absorption current, can make a very good charger

- Fast chargers – measure the SOC and adjust the supply parameters to always deliver the maximum possible charge rate without overcharging at that SOC
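To make the constant-voltage charger with a current limit concrete, here is a minimal Python sketch of the current it would deliver. It assumes a deliberately simple battery model (an EMF `v_battery` behind a fixed internal resistance `r_internal`); the function name and parameters are hypothetical, and a real battery behaves in a more complex way.

```python
def cv_charge_current(v_set, v_battery, r_internal, i_limit):
    """Current delivered by a current-limited constant-voltage charger.

    Simplified model (an assumption): the battery is an EMF v_battery behind
    a fixed internal resistance r_internal, so without a limit the charger
    would push (v_set - v_battery) / r_internal amps.
    """
    if r_internal <= 0:
        raise ValueError("r_internal must be positive")
    i_unlimited = (v_set - v_battery) / r_internal
    # Clamp to the current limit, and never let the charger sink current.
    return max(0.0, min(i_limit, i_unlimited))
```

With `v_set=14.4`, an illustrative `r_internal=0.05` and `i_limit=3.0`, a flat battery at 12.0V receives the full 3A limit, at 14.3V the current has tapered to about 2A, and it falls to zero as the battery reaches the setpoint. That natural taper is what lets a constant-voltage charger avoid overcharging.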

Controlling our BMS in software gives us the flexibility to trial and implement different charging systems and find the ideal solution for our design. Ideally we will implement an effective fast-charging system, but we can also program regulated taper charging as a backup plan if none of the algorithms work. Fast charging requires us to measure the SOC and to have a method of changing the supply parameters accordingly.

Lead Acid Batteries

Because of their internal chemistry, lead acid batteries generally need a minimum of 2.15V per cell to charge, which is about 12.9V for a 12V battery. A 12V battery would normally be charged at up to 14.1V, but deep discharge cycling mode allows up to 14.7V for a faster charge rate, provided the charger drops the voltage to a ‘float voltage’ once the battery is fully charged (Power Stream, n.d.). The float voltage of most flooded lead acid batteries is generally 2.25V to 2.27V per cell (Battery University, n.d.).
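The per-cell figures above scale to the whole battery by the number of cells in series, which is six for a 12V lead acid battery. A small sketch of that arithmetic (the helper names are our own):

```python
CELLS_12V = 6  # a nominal 12V lead acid battery has six cells in series


def pack_voltage(volts_per_cell, cells=CELLS_12V):
    """Voltage across the whole battery for a given per-cell voltage."""
    return volts_per_cell * cells


def cell_voltage(pack_volts, cells=CELLS_12V):
    """Per-cell voltage for a given voltage across the whole battery."""
    return pack_volts / cells


min_charge_v = pack_voltage(2.15)                                  # about 12.9V
float_v = (pack_voltage(2.25), pack_voltage(2.27))                 # about 13.5V to 13.6V
charge_limits_per_cell = (cell_voltage(14.1), cell_voltage(14.7))  # 2.35V and 2.45V
```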

Sealed lead acid batteries should generally not be charged at a rate higher than C/3, where C is the battery's capacity in amp-hours. With our specified C = 10Ah, this means a charging current below about 3.3A (Power Stream, n.d.).

This limit both helps prevent overcharging and limits its effects: any overcharging that occurs while charging at a roughly constant current of up to C/3 can be tolerated, because modern batteries can often recombine the oxygen produced by overcharging at up to this rate (Linden, 2010).
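As a sketch of how the C/3 guideline could be enforced in the BMS software, the helpers below (names hypothetical) compute the limit for our 10Ah battery and clamp any requested charge current to it.

```python
BATTERY_CAPACITY_AH = 10.0  # C for our battery


def max_charge_current(capacity_ah=BATTERY_CAPACITY_AH, divisor=3.0):
    """Largest recommended charge current in amps for a C/divisor limit."""
    return capacity_ah / divisor


def clamp_charge_current(requested_a, capacity_ah=BATTERY_CAPACITY_AH):
    """Limit a requested charge current to C/3, never going below zero."""
    return max(0.0, min(requested_a, max_charge_current(capacity_ah)))
```

For C = 10Ah, `max_charge_current()` gives about 3.33A, so a request for 5A would be clamped to that value while a request for 2A passes through unchanged.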

To improve the speed and efficiency of charging, a multi-stage process can be used: with the state of charge monitored, the supply voltage and current are varied at key points across the range of SOC. Typically this means 14.4 volts during the fast charging phase and 13.8 volts during the float charging phase, because at 13.8 volts even a full battery produces very little oxygen, and even at around 14.4V the overcharge rate is only about C/100 (Power Stream, n.d.).
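A minimal sketch of the multi-stage idea, choosing the supply setpoint from the monitored SOC. The 14.4V and 13.8V values come from the text above and the current limit reuses the C/3 guideline; the 95% switch-over point `float_soc` and all names are hypothetical and would need tuning by testing.

```python
from dataclasses import dataclass

FAST_CHARGE_V = 14.4  # fast charging phase voltage
FLOAT_V = 13.8        # float charging phase voltage


@dataclass
class Setpoint:
    stage: str
    voltage: float
    current_limit: float


def multistage_setpoint(soc, capacity_ah=10.0, float_soc=0.95):
    """Choose the supply voltage and current limit from the measured SOC.

    soc is a fraction between 0 and 1. float_soc is a hypothetical
    switch-over point, not a value from the battery documentation.
    """
    if not 0.0 <= soc <= 1.0:
        raise ValueError("soc must be between 0 and 1")
    current_limit = capacity_ah / 3.0  # stay at or below C/3
    if soc < float_soc:
        return Setpoint("fast", FAST_CHARGE_V, current_limit)
    return Setpoint("float", FLOAT_V, current_limit)
```

In practice some hysteresis around `float_soc` would stop the charger flipping between stages when the SOC estimate is noisy.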

The SOC can be derived either directly from generic or manufacturer-specific tables, such as those in the graphs below, or more accurately through other methods. See the State of Charge Measurement section for more information on SOC measurement.
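Deriving the SOC from a table usually means interpolating between its rows. The sketch below does linear interpolation on a resting (open-circuit) voltage table. The table values are illustrative placeholders of the kind found in generic tables, not measurements of our battery, and should be replaced with the manufacturer's data. Such tables only apply once the battery has rested, since the terminal voltage is raised while charging and depressed while discharging.

```python
from bisect import bisect_left

# Illustrative placeholder table for a 12V battery: (resting volts, SOC fraction),
# sorted by voltage. Replace with the manufacturer's table for the real battery.
OCV_TABLE = [
    (11.89, 0.00),
    (12.06, 0.25),
    (12.24, 0.50),
    (12.45, 0.75),
    (12.65, 1.00),
]


def soc_from_ocv(voltage, table=OCV_TABLE):
    """Linearly interpolate SOC from a resting-voltage table, clamped to its ends."""
    volts = [v for v, _ in table]
    if voltage <= volts[0]:
        return table[0][1]
    if voltage >= volts[-1]:
        return table[-1][1]
    i = bisect_left(volts, voltage)  # first row with volts >= voltage
    (v0, s0), (v1, s1) = table[i - 1], table[i]
    return s0 + (s1 - s0) * (voltage - v0) / (v1 - v0)
```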

Ripple Voltage

Ripple voltage also causes a problem with large stationary batteries. A voltage peak constitutes an overcharge, causing hydrogen evolution, while the valley induces a brief discharge that creates a starved state resulting in electrolyte depletion. Manufacturers limit the ripple on the charge voltage to 5 percent. (Battery University, n.d.)
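One way the BMS could check the 5 percent limit is to sample the charge voltage over a few ripple cycles and compare the peak-to-peak ripple with the mean. The source does not say whether the limit is peak-to-peak or RMS, so the peak-to-peak definition here is an assumption, as are the function names.

```python
def ripple_percent(samples):
    """Peak-to-peak ripple as a percentage of the mean of the voltage samples.

    Assumes a peak-to-peak definition; check how the battery manufacturer
    defines its ripple limit before relying on this.
    """
    if not samples:
        raise ValueError("need at least one sample")
    mean = sum(samples) / len(samples)
    return 100.0 * (max(samples) - min(samples)) / mean


def ripple_ok(samples, limit_percent=5.0):
    """True if the sampled ripple is within the limit."""
    return ripple_percent(samples) <= limit_percent
```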

Current Sensors

Hall Sensors

One of the most accurate and popular ways of measuring current in an electrical circuit is to use a Hall sensor. However, these are relatively expensive and bulky, and that level of accuracy is not necessary for our prototype.

Shunt Resistors

It is cheaper to measure the voltage drop across a fixed, known resistor (a shunt resistor) and derive the current from it using Ohm's law. To minimise the impact on the power supplied to the rest of the circuit, the shunt resistance should be as small as possible. As with the voltage measurement, precautions need to be taken to ensure that the measured voltage does not exceed 3.3V. A current monitor IC such as the ZXCT1009 can be used to support this, as long as the measured currents are within its safe limits.
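The shunt calculation is Ohm's law, and the same resistor dissipates I²R, which is why it should be small. The sketch below also shows how a current-output monitor like the ZXCT1009 scales the sense voltage into a ground-referenced output across a load resistor. The 10mA/V transconductance (`gm`) is our reading of the ZXCT1009 datasheet and should be checked, the resistor values in the usage note are illustrative, and the function names are hypothetical.

```python
ADC_MAX_V = 3.3  # highest voltage we allow at the microcontroller input


def shunt_current(v_shunt, r_shunt):
    """Current through the shunt from the voltage measured across it (I = V / R)."""
    return v_shunt / r_shunt


def shunt_power(current_a, r_shunt):
    """Power wasted as heat in the shunt (P = I^2 R)."""
    return current_a ** 2 * r_shunt


def monitor_output_v(current_a, r_shunt, r_out, gm=0.01):
    """Monitor output voltage: V_out = gm * (I * R_shunt) * R_out (gm assumed 10mA/V)."""
    return gm * current_a * r_shunt * r_out


def current_from_monitor(v_out, r_shunt, r_out, gm=0.01):
    """Invert monitor_output_v to recover the load current from the measured V_out."""
    return v_out / (gm * r_shunt * r_out)
```

With a 0.1Ω shunt and a 1kΩ output resistor, 3A gives a 0.3V sense voltage and about 3.0V at the output, just inside the 3.3V limit, while the shunt dissipates about 0.9W. This shows the trade-off between measurement range and losses when choosing the shunt.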

Temperature Sensors

As a general rule for lead acid batteries, the charge voltage should be reduced by 3mV per cell (about 18mV for a 12V battery with six cells) for every degree above room temperature (25°C is a reasonable assumption) and increased by the same amount for every degree below it (Battery University, n.d.). So even if temperature is not included in the state of charge calculations, it still needs to be monitored for safety. It is also recommended to lower the float voltage if the temperature rises above 29°C.
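The temperature rule translates directly into a setpoint adjustment. A minimal sketch, assuming a 25°C reference and six cells; the function and constant names are hypothetical:

```python
MV_PER_CELL_PER_DEG = 3.0    # compensation rule of thumb, mV per cell per degree C
REFERENCE_TEMP_C = 25.0      # assumed room temperature
FLOAT_DERATE_ABOVE_C = 29.0  # above this, the float voltage should be lowered


def compensated_voltage(v_nominal, temp_c, cells=6):
    """Adjust a charge voltage setpoint for battery temperature.

    Lowers the setpoint by 3mV per cell for each degree above the reference
    and raises it by the same amount for each degree below.
    """
    delta_v = MV_PER_CELL_PER_DEG * cells * (temp_c - REFERENCE_TEMP_C) / 1000.0
    return v_nominal - delta_v


def should_lower_float(temp_c):
    """True when the battery is warm enough that the float voltage should be lowered."""
    return temp_c > FLOAT_DERATE_ABOVE_C
```

For example, a 14.4V setpoint becomes about 14.22V at 35°C and about 14.58V at 15°C.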

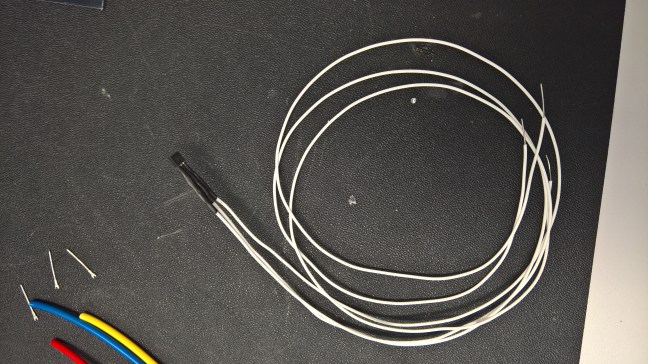

We are using a DS18B20 temperature sensor, held near the battery, to monitor this because it uses a simple 1-Wire serial interface (with 12-bit resolution) that is easy to implement in code. It takes a supply voltage of 3.0V to 5.5V DC and has a maximum current usage of 4mA, but it can also run in parasitic power mode, drawing its power from the data line. Its measurement range of -55°C to +125°C comfortably covers the range of temperatures the battery may be used in. Its accuracy is ±0.5°C (from -10°C to +85°C), which should be sufficient for our application.

We are operating the sensor in parasitic power mode by tying Vdd to ground to minimise our power losses.
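Reading the sensor returns a two-byte temperature from its scratchpad, least significant byte first. A sketch of converting those bytes to degrees Celsius at the default 12-bit resolution, where each count is 0.0625°C. The 1-Wire transaction itself would be handled by a library on the microcontroller and is not shown; the function name is our own.

```python
def ds18b20_raw_to_celsius(lsb, msb):
    """Convert the DS18B20 temperature bytes (12-bit resolution) to degrees Celsius.

    The two bytes form a 16-bit two's complement value in units of 0.0625C.
    """
    raw = (msb << 8) | lsb
    if raw & 0x8000:      # sign bit set: negative temperature
        raw -= 1 << 16
    return raw * 0.0625
```

The example readings from the datasheet decode as expected: bytes 0x91, 0x01 give 25.0625°C and 0x90, 0xFC give -55°C.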

References

Battery University. (n.d.). Charging The Lead Acid Battery. Retrieved February 2016, from http://batteryuniversity.com/learn/article/charging_the_lead_acid_battery

Linden, D. (2010). Linden’s Handbook of Batteries.

Particle Core Official Website. (n.d.). Retrieved February 2016, from https://www.particle.io/

Power Stream. (n.d.). SLA. Retrieved February 2016, from http://www.powerstream.com/SLA.htm

Power Stream. (n.d.). SLA Fast Charging. Retrieved February 2016, from http://www.powerstream.com/SLA-fast-charge.htm

Simon, D. (2001). Kalman Filters. In Embedded Systems Programming (pp. 72 – 79).

Wikipedia. (n.d.). Kalman Filter. Retrieved March 2016, from https://en.wikipedia.org/wiki/Kalman_filter

Yeau-Chang, W. (2013). The State of Charge Estimating Methods for Battery: A Review.

Next: State of Charge Measurement
